Palantir and Nvidia’s Sovereign AI: What It Means for the Future of Enterprise AI

Artificial intelligence is rapidly becoming one of the most strategic technologies in the world. Governments are investing billions into AI infrastructure to improve national security, manage public services, and maintain economic competitiveness.

Recently, Palantir and Nvidia announced a partnership to deploy what they call “Sovereign AI.” The platform combines Palantir’s enterprise software stack with Nvidia’s powerful AI computing infrastructure to help governments deploy large-scale artificial intelligence systems.

At first glance, the announcement appears to focus on national governments, defense agencies, and critical infrastructure.

But the concept behind sovereign AI is much bigger than government technology.

It signals a shift that will reshape how enterprises build and deploy AI systems inside their own organizations.

What Is Sovereign AI?

Sovereign AI refers to artificial intelligence systems that operate under the full control of the organization or nation using them.

Instead of sending data to external AI providers, sovereign AI systems allow organizations to:

Run AI models on their own infrastructure

Keep sensitive data inside controlled environments

Maintain governance and compliance over how AI operates

Integrate AI directly with internal systems and workflows

For governments, this approach protects national security and critical infrastructure.

For enterprises, it solves a different but equally important problem: data control.

Companies increasingly want to use AI without exposing sensitive internal information to external platforms.

The Palantir–Nvidia AI Stack

The partnership between Palantir and Nvidia combines two key layers required to deploy large-scale AI systems.

Palantir provides the software platform, while Nvidia supplies the AI computing infrastructure.

Palantir’s AI OS Reference Architecture includes several components:

AIP (Artificial Intelligence Platform) – integrates and deploys AI models

Foundry – organizes and analyzes enterprise data

Apollo – manages software deployment across complex environments

Rubix – runs the underlying Kubernetes-based infrastructure and enforces security controls at that layer

AIP Hub – manages AI applications

Nvidia contributes the computing layer needed to power these systems.

This includes:

High-performance AI GPUs

AI supercomputing clusters

High-speed networking infrastructure

AI software optimization tools

Together, these technologies create a complete AI operating system for complex organizations.

Why Sovereign AI Matters for Enterprises

While the initial focus of sovereign AI is government systems, the underlying trend is spreading quickly across the private sector.

Organizations are beginning to realize that AI adoption requires control over data, infrastructure, and governance.

Three major shifts are driving this trend.

Data Security and Privacy

Companies store vast amounts of sensitive information, including financial records, customer data, intellectual property, and operational documents.

Many organizations are hesitant to send this information to external AI platforms.

Sovereign-style AI architectures allow companies to run AI within their own secure environments.

Regulatory and Compliance Pressure

Industries like finance, healthcare, and government contracting face strict compliance requirements.

Organizations must track:

who accesses data

how information is used

how decisions are made

AI systems operating within controlled environments make it easier to meet these regulatory standards.
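To make the three tracking requirements above concrete, here is a minimal Python sketch of an audit record for AI requests. It is an illustration only: the `AuditRecord` and `AuditLog` names and every field are assumptions made for this example, not part of any Palantir or Nvidia product, and a real deployment would write to durable, tamper-evident storage rather than a list in memory.

```python
import json
from dataclasses import dataclass, asdict, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """One entry per AI request. All field names are illustrative."""
    user: str            # who accessed the data
    sources: list[str]   # which internal records were read
    purpose: str         # how the information was used
    decision: str        # what the system concluded
    rationale: str       # how the decision was made
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


class AuditLog:
    """Append-only, in-memory log (hypothetical stand-in for real storage)."""

    def __init__(self):
        self._records = []

    def record(self, entry: AuditRecord) -> None:
        self._records.append(entry)

    def by_user(self, user: str) -> list[AuditRecord]:
        # Answers the "who accesses data" question for a single person.
        return [r for r in self._records if r.user == user]

    def export(self) -> str:
        # One JSON object per line, a common format for compliance review.
        return "\n".join(json.dumps(asdict(r)) for r in self._records)
```

Because the log lives inside the same controlled environment as the model, each question a regulator might ask maps to a field that the organization itself owns.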

Integration With Internal Knowledge

The most valuable AI applications often rely on internal knowledge, not public data.

Examples include:

company policies

operational procedures

engineering documentation

financial models

compliance guidelines

For AI to be useful inside organizations, it must connect directly to these internal knowledge sources.

Sovereign AI vs Cloud AI

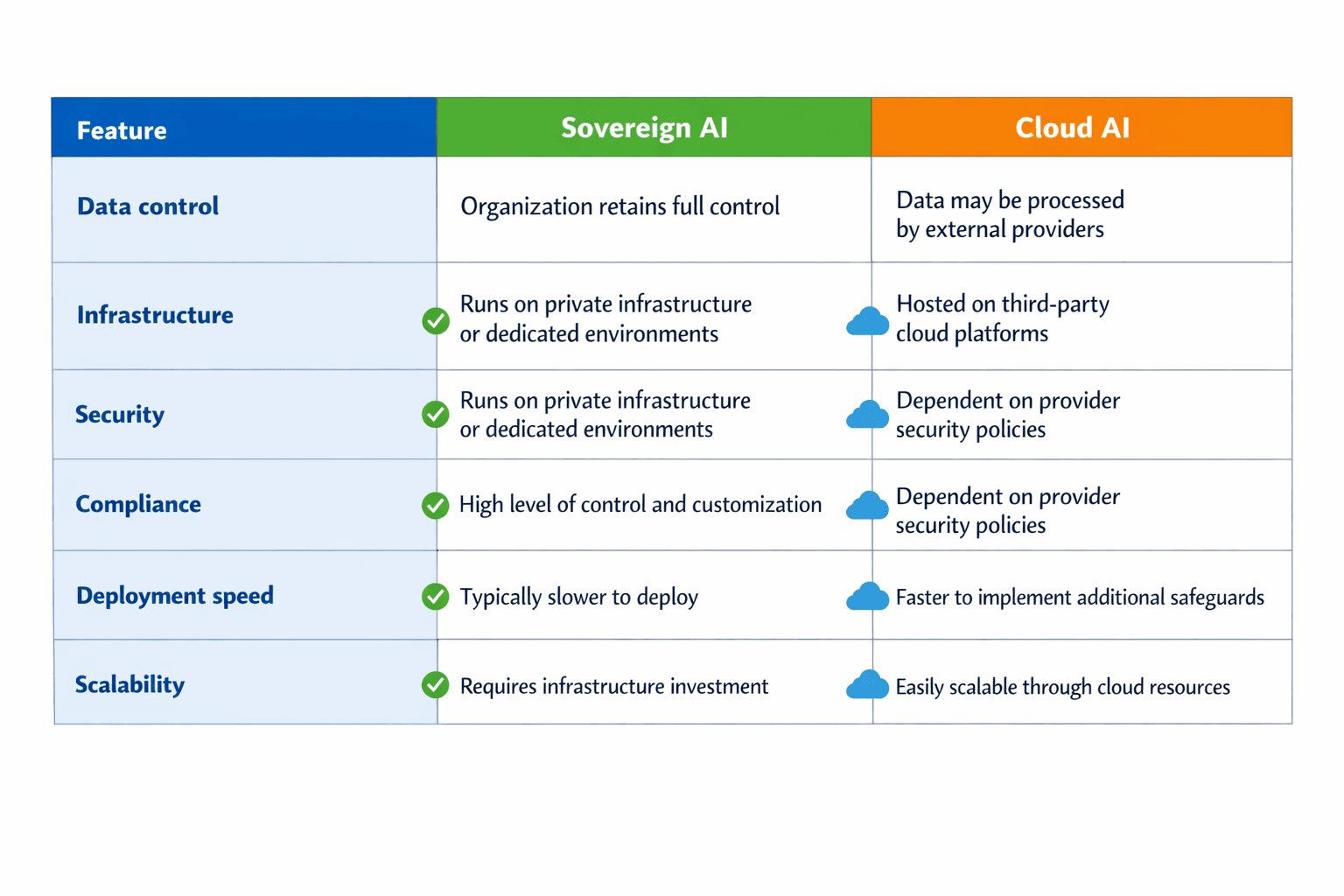

Many organizations today rely on cloud-based AI platforms to access machine learning models and generative AI capabilities. These services are powerful and easy to deploy, but they come with trade-offs related to data control, governance, and long-term infrastructure strategy.

Sovereign AI offers an alternative approach.

Instead of sending sensitive data to external platforms, organizations deploy AI systems within their own infrastructure or private cloud environments.

Below is a simplified comparison.

Many organizations adopt a hybrid strategy, using cloud AI for general tasks while deploying sovereign-style AI systems for sensitive data and mission-critical operations.
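A hybrid strategy like this usually comes down to a routing decision made before any data leaves the organization. The Python sketch below shows one simple way to express that decision. The keyword lists, the `Sensitivity` levels, and the backend names `"cloud"` and `"private"` are all assumptions made for illustration; a production system would rely on a proper data-classification service rather than substring matching.

```python
from enum import Enum


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    RESTRICTED = 3


# Hypothetical keyword rules, for illustration only.
RESTRICTED_MARKERS = ("patient", "account number", "classified")
INTERNAL_MARKERS = ("internal", "confidential", "draft")


def classify(text: str) -> Sensitivity:
    """Assign the highest sensitivity level whose marker appears in the text."""
    lowered = text.lower()
    if any(marker in lowered for marker in RESTRICTED_MARKERS):
        return Sensitivity.RESTRICTED
    if any(marker in lowered for marker in INTERNAL_MARKERS):
        return Sensitivity.INTERNAL
    return Sensitivity.PUBLIC


def route(text: str) -> str:
    """Return the backend that should handle the request."""
    # Only clearly public content goes to the external cloud service;
    # everything else stays on the organization's own infrastructure.
    if classify(text) is Sensitivity.PUBLIC:
        return "cloud"
    return "private"
```

The design choice worth noting is the default: anything not recognized as public is kept private, so a classification miss costs some convenience rather than exposing data.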

Examples of Sovereign AI in Enterprise

While the term “sovereign AI” is often associated with governments, many enterprises are already implementing similar architectures.

Large organizations across multiple industries are building AI systems that operate within their own infrastructure and data environments.

Here are several common examples.

Financial Services

Banks and financial institutions process highly sensitive data and must comply with strict regulations. Many institutions deploy private AI systems to analyze risk models, detect fraud, and assist with underwriting decisions without exposing financial data externally.

Healthcare Systems

Healthcare providers use AI to analyze patient data, medical imaging, and clinical documentation. Due to privacy regulations, many hospitals run AI models within secure internal environments.

Manufacturing and Industrial Operations

Manufacturers deploy AI to monitor production systems, optimize supply chains, and predict equipment failures. These systems often run within internal networks connected to operational technology.

Government Contractors

Organizations working with defense and intelligence agencies frequently operate AI systems inside classified or restricted networks to maintain security and compliance.

In each of these cases, the goal is the same: use AI while maintaining full control over sensitive information.

How Companies Can Build Sovereign AI Systems

Building a sovereign-style AI system does not necessarily require creating an entire national AI infrastructure. Many enterprises are already taking steps toward this model using existing technology.

Most enterprise AI architectures include three key components.

1. Data Infrastructure

The first step is organizing enterprise data so it can be accessed and understood by AI systems.

This may include:

document repositories

data warehouses

knowledge bases

internal databases

Without structured access to data, AI systems cannot provide reliable insights.

2. AI Models and Processing

Organizations then deploy AI models that can analyze internal data and generate responses or predictions.

These models may run on:

private cloud environments

dedicated AI servers

high-performance computing clusters

Some organizations also fine-tune models using their own proprietary datasets.

3. AI Applications and Interfaces

The final layer is the application layer, where employees and customers actually interact with AI systems. This is the visible part of the AI stack: the tools, interfaces, and workflows where AI delivers value to the organization. The data and model layers supply the intelligence behind the scenes, and the application layer puts that intelligence to work in daily business operations.

Common interfaces in this layer include:

AI assistants for internal knowledge

These assistants allow employees to ask questions and instantly retrieve answers from company documents, policies, procedures, and internal systems. Instead of searching through shared drives or knowledge bases, teams can ask natural language questions and receive structured answers with sources.

Automated analytics dashboards

AI-powered dashboards go beyond static reporting. They can summarize trends, highlight anomalies, generate insights automatically, and answer follow-up questions about business metrics. This allows executives and teams to move from raw data to decision-making much faster.

Workflow automation tools

AI can automate routine operational tasks such as document processing, approvals, compliance checks, and internal requests. By integrating with existing systems, these tools help reduce manual work, improve consistency, and accelerate business processes.

Customer support AI systems

Customer-facing AI tools handle common inquiries, guide users through troubleshooting, and assist support teams with suggested responses. These systems help organizations respond faster while maintaining consistent service quality across large volumes of requests.

This layer is where AI ultimately becomes embedded into everyday business operations, supporting employees, improving customer experiences, and helping organizations operate more efficiently.
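As a rough illustration of the internal knowledge assistant described above, the Python sketch below retrieves passages from a small document store and returns each one with its source. Everything here is a stand-in: the `DOCUMENTS` contents are invented sample text, and the word-overlap scoring is a deliberately simple substitute for the embedding-based search a real assistant would more likely use.

```python
import re

# Hypothetical in-memory document store: source name -> text.
DOCUMENTS = {
    "travel-policy.txt": "Employees must book flights at least 14 days before travel.",
    "expense-policy.txt": "Expense reports are due within 30 days of purchase.",
    "security-guide.txt": "Laptops must use full disk encryption.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def search(question: str, documents: dict[str, str], top_k: int = 2) -> list[dict]:
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    scored = []
    for source, text in documents.items():
        overlap = len(question_words & tokenize(text))
        if overlap:
            scored.append((overlap, source, text))
    # Highest overlap first; ties are broken alphabetically by source name.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [{"source": s, "passage": t} for _, s, t in scored[:top_k]]
```

Calling `search("When are expense reports due?", DOCUMENTS)` returns the expense policy passage together with its file name, which is the "answers with sources" behavior in miniature. The retrieved passages would then be handed to a model running inside the same environment.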

The Rise of Enterprise AI Infrastructure

The Palantir–Nvidia partnership highlights a broader shift in the AI industry.

Instead of relying exclusively on external AI services, organizations are increasingly building their own AI infrastructure stacks.

These stacks typically include three layers:

Data Layer

Systems that organize enterprise data.

AI Model Layer

Machine learning models that analyze enterprise data.

Application Layer

Interfaces that allow employees to interact with AI systems.

This layered architecture mirrors how governments are deploying sovereign AI platforms.

Enterprises are simply applying the same principles within their organizations.
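The three layers can be sketched as three small Python classes, each depending only on the one beneath it. This is a toy model of the idea, not a description of how Palantir or Nvidia structure their products: the class names, the dictionary-backed data layer, and the `first_sentence` placeholder "model" are all assumptions chosen to keep the example runnable.

```python
from typing import Callable


class DataLayer:
    """Organizes enterprise data; here, a plain dict keyed by document name."""

    def __init__(self, records: dict[str, str]):
        self._records = records

    def fetch(self, name: str) -> str:
        return self._records[name]


class ModelLayer:
    """Wraps whatever model analyzes the data; the callable is a stand-in."""

    def __init__(self, model: Callable[[str], str]):
        self._model = model

    def analyze(self, text: str) -> str:
        return self._model(text)


class ApplicationLayer:
    """The interface employees use; it talks only to the two layers below."""

    def __init__(self, data: DataLayer, model: ModelLayer):
        self._data = data
        self._model = model

    def summarize(self, name: str) -> str:
        return self._model.analyze(self._data.fetch(name))


def first_sentence(text: str) -> str:
    """Placeholder 'model': returns the first sentence of the input."""
    return text.split(".")[0].strip() + "."
```

Keeping the layers separate means the placeholder model can be swapped for a real one, or the dictionary for a data warehouse, without changing the application code that employees depend on.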

Nvidia’s Strategic Role in the AI Economy

Nvidia plays a central role in this transformation.

The company’s GPUs power a large share of modern AI training and inference workloads.

As more organizations deploy sovereign-style AI infrastructure, demand for high-performance computing will continue to grow.

Nvidia is also investing heavily in open-weight AI models designed to run efficiently on its hardware.

One example is the Nemotron family of AI models, which are optimized to maximize performance on Nvidia GPUs.

This strategy encourages organizations building private AI systems to standardize on Nvidia infrastructure.

What This Means for the Future of Enterprise AI

The concept of sovereign AI reflects a broader shift in how organizations are deploying artificial intelligence. In the early stages of AI adoption, most companies relied heavily on centralized cloud platforms to access AI capabilities. That approach allowed businesses to move quickly, but it also meant relying on external providers for infrastructure, data processing, and model access.

As AI becomes more deeply integrated into core operations, many organizations are seeking greater control over how their systems operate. They want stronger governance over AI models, tighter integration with internal workflows, and secure environments where sensitive data remains protected. This shift is pushing enterprises toward private AI systems built around their own knowledge, infrastructure, and security requirements—a trend highlighted by large initiatives such as the partnership between Palantir and Nvidia.

FAQ

What is sovereign AI?

Sovereign AI refers to artificial intelligence systems that operate under the control of the organization or government using them, ensuring full ownership of data and infrastructure.

Why is sovereign AI important for enterprises?

Enterprises want to maintain control over sensitive data while still benefiting from AI capabilities.

What role do Palantir and Nvidia play?

Palantir provides AI software platforms, while Nvidia provides the computing infrastructure needed to run AI models.

Will enterprises adopt sovereign AI?

Many organizations are already moving toward private AI systems to maintain security, compliance, and data control.